I round up the most relevant AI-in-finance news - the deals being done, who’s rolling out what, and what’s actually working on the front lines.

OpenAI closed its record $122 billion funding round this week at an $852 billion valuation…

Same week it bought a tech talk show for "low hundreds of millions." Both moves tell you something about where AI companies think the value is shifting: infrastructure on one end, narrative control on the other.

KPMG published its 2026 Global M&A Outlook with the line that stopped me: "Organisations that capture the most value will likely be those that make their deal processes legible to machines, not just to the humans who currently hold them." That's the entire DealSage thesis in 27 words.

Elsewhere this week: Anthropic accidentally leaked 512,000 lines of Claude Code's source onto GitHub and Oracle cut up to 30,000 jobs to fund its AI buildout.

But first, my take on the hidden cost of enterprise AI and why most firms haven't done the maths on what this is actually going to cost them.

In This Week’s Issue:

From The Trenches:

So what is this really going to cost?

News Digest:

KPMG 2026: making deal processes legible to machines

OpenAI's $852B week: record raise, media acquisition, and the narrative game

Other Interesting Things I’ve Read or Seen this Week:

Anthropic's Claude Code source leak, Oracle cuts 30k jobs, Challenger March data, Microsoft picks mid-class, OneStream goes private, Numos raises for AI finance agents, SpaceX files confidentially, VC money concentrates

From The Trenches

So What Is This Really Going To Cost?

A post caught my eye this week about how Anthropic prices Claude. The takeaway was how much of a steal the consumer plan is given its level of subsidy. Nothing that new in the world of VC-backed software. But it made me think about something more interesting: how the enterprise model actually works, and what I think it means for AI software pricing in general.

The consumer plan is simple: $125 to $200 a month, flat rate, effectively unlimited. Great value if you're a prosumer. But if you're an enterprise, the model flips entirely. You pay a base seat fee of $50 to $60 per month, then pure usage-based billing on top at API rates. No included token allowance. Every message, every Claude Code session, every agent task, metered.

In practice, engineering-heavy seats run $5,000 to $10,000 a month. Even non-technical users on deal teams end up at $1,000 to $2,000 per seat.
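To make the seat maths concrete, here is a minimal sketch of how a metered seat adds up. The token prices and usage volumes are illustrative assumptions picked to land inside the range above, not Anthropic's published rates, and `TokenPrice` and `monthly_seat_cost` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrice:
    """Price per million tokens in USD (illustrative, not a published rate)."""
    input_per_m: float
    output_per_m: float


def monthly_seat_cost(base_fee: float, input_tokens: int, output_tokens: int,
                      price: TokenPrice) -> float:
    """Base seat fee plus metered usage, with no included token allowance."""
    usage = ((input_tokens / 1_000_000) * price.input_per_m
             + (output_tokens / 1_000_000) * price.output_per_m)
    return base_fee + usage


# Assumed frontier-tier price and usage for one engineering-heavy seat.
frontier = TokenPrice(input_per_m=15.0, output_per_m=75.0)
seat_cost = monthly_seat_cost(base_fee=60.0,
                              input_tokens=300_000_000,
                              output_tokens=20_000_000,
                              price=frontier)
print(f"Estimated monthly cost per seat: ${seat_cost:,.0f}")
```

The point is how small the seat fee is next to the usage line. Swap in your own token volumes and contract rates before drawing conclusions from it.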

Most people using Claude or ChatGPT on a consumer plan don't realise their usage is being subsidised. The flat-rate plans are loss leaders. They build habit, drive adoption, and create the dependency that makes the enterprise conversion inevitable. Once your compliance team insists on SSO, SCIM, audit logs, and SOC 2, the consumer plan is off the table. Enterprise is the only path. And enterprise is metered.

"The consumer plan is the funnel. Enterprise is the monetisation event. And most firms haven't done the maths on the difference."

The firms I speak to still don't fully appreciate this dynamic. They trial Claude on a Max plan, get excited about the capability, and then hit a wall when procurement starts costing out the enterprise deployment. The per-seat economics look nothing like the trial. And this gap is only going to widen. It's in Anthropic's interest (and OpenAI's) to move more and more usage toward metered plans. That's where the revenue is.

The Orchestration Problem

Which brings us to the next problem: model choice is the single largest variable in your AI bill, and almost nobody is making it consciously.

Opus 4.6 is a bazooka. It's also priced like one. Running Opus to triage an inbox, classify an email, or extract a number from a document is bringing a bazooka to a knife fight.

Claude in its default mode runs Opus. You can't easily select different models for different tasks, at least not without building your own orchestration layer. Which means most teams are paying frontier prices for tasks that a model a tenth of the cost could handle just as well.

The firms that will keep their AI spend under control are the ones building orchestration into their stack from day one. Route the hard reasoning to Opus or GPT-5. Send the extraction, classification, and routing tasks to Haiku or Sonnet or a locally-hosted open-weight model.
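A routing layer can start out very small. The sketch below is one possible shape, assuming a hypothetical set of task labels and three tiers. The mapping and the sensitive-data rule are illustrative policy choices, not a description of any vendor's API or of a production system.

```python
from enum import Enum


class Tier(Enum):
    FRONTIER = "frontier"   # e.g. Opus-class, for hard reasoning
    MID = "mid"             # e.g. Sonnet- or Haiku-class
    LOCAL = "local"         # self-hosted open-weight model


# Hypothetical task labels mapped to the cheapest tier assumed adequate.
ROUTES = {
    "classification": Tier.LOCAL,
    "extraction": Tier.MID,
    "summarisation": Tier.MID,
    "complex_reasoning": Tier.FRONTIER,
}


def route(task_type: str, contains_sensitive_data: bool = False) -> Tier:
    """Pick a tier for a task; unknown task types fall back to frontier."""
    tier = ROUTES.get(task_type, Tier.FRONTIER)
    # Assumed policy: sensitive data on simple tasks stays in-house.
    if contains_sensitive_data and tier is not Tier.FRONTIER:
        return Tier.LOCAL
    return tier


print(route("classification"))                            # Tier.LOCAL
print(route("extraction", contains_sensitive_data=True))  # Tier.LOCAL
print(route("complex_reasoning"))                         # Tier.FRONTIER
```

Defaulting unknown tasks to the frontier tier trades cost for safety. A stricter setup might reject unlabelled tasks instead.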

On a call with a PE firm this week I was walking through how we do inbox triage at DealSage. We don't run Opus on it. We run a lightweight internal model, hosted separately, for two reasons. First, token costs: if you ran Opus on every inbound email at firm scale, you'd be at thousands of dollars a month on that one workflow alone. Second, security: when you use a lightweight self-hosted model, the data never leaves your environment.
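As a rough illustration of the token-cost point, the sketch below prices one inbox-triage workflow at two tiers. Every number in it is an assumption for the sake of the arithmetic (headcount, emails per day, tokens per email, and both price points), and `workflow_cost` is a hypothetical helper.

```python
def workflow_cost(items: int, input_tokens_each: int, output_tokens_each: int,
                  input_price_per_m: float, output_price_per_m: float) -> float:
    """Monthly USD cost of running one model over `items` documents."""
    input_m = items * input_tokens_each / 1_000_000
    output_m = items * output_tokens_each / 1_000_000
    return input_m * input_price_per_m + output_m * output_price_per_m


# Assumed volume: 50 people x 100 inbound emails x 22 working days.
emails_per_month = 50 * 100 * 22

# Assumed sizes: 1,500 tokens read and 100 tokens written per email.
frontier_cost = workflow_cost(emails_per_month, 1_500, 100, 15.0, 75.0)
small_model_cost = workflow_cost(emails_per_month, 1_500, 100, 1.0, 5.0)

print(f"Frontier tier:    ${frontier_cost:,.0f} per month")
print(f"Small-model tier: ${small_model_cost:,.0f} per month")
```

Under these assumptions the same workflow costs fifteen times more on the frontier tier, purely because of the price ratio. A self-hosted model replaces the per-token charge with fixed hosting cost, which this sketch does not model.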

The Flattening Curve

There's a second force working in the orchestrator's favour: the capability gap between frontier and mid-tier models is narrowing. I wrote about this back in March. The model doesn't matter as much as people think it does.

This week, Microsoft's AI chief Mustafa Suleyman told the FT that Microsoft is "not able to build models in the very largest scale yet" and is deliberately "competing in the mid-class range." He described mid-class as "optimal in terms of balancing cost, performance, quality and large-scale usage."

This from the company that owns Azure and is contractually entangled with OpenAI. It makes me think we're going to see more model providers offering different classes optimised for different workloads. Think of it like cars. You don't drive a Range Rover to pick up milk. You don't take a Fiat 500 on a 400-mile motorway trip. You pick the vehicle for the job. Same logic applies to models. Opus is your long-range cruiser for complex reasoning. GPT 5.4 is your daily runner for extraction and classification. Most firms are driving the Range Rover everywhere and wondering why the fuel bill is so high.

For the practical workloads most finance teams actually run, the incremental gain from frontier over mid-tier is shrinking by the month. That's what makes orchestration matter more over time, not less. Paying Opus prices for Opus-class tasks will always make sense. Paying Opus prices for Sonnet-class tasks is the mistake that's going to define a lot of 2026 AI budgets.

The Local Inference Question

One idea gaining traction is running models locally on self-hosted GPUs.

At the low end it already works. Smaller open-weight models (Llama, Mistral, Qwen, Phi) run comfortably on a single high-end GPU or even consumer hardware. For classification, extraction, summarisation, and structured parsing, local inference is genuinely viable today. Companies with strict privacy requirements in defence, healthcare, and legal are already doing this at scale.

Running something comparable to Opus locally is a different story. You're looking at multiple H100s ($30 to $40k each), proper networking, and an engineer to maintain it. A reasonable-throughput frontier cluster is $500k to $2M+ before power, cooling, or ops. That only pencils out at extremely high volume. But model distillation means what required 70B parameters two years ago can often be done at 7 to 13B today. And the hardware options are expanding.
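One way to sanity-check the "only pencils out at extremely high volume" claim is a break-even calculation. This is a sketch under stated assumptions: the amortisation period, the monthly running cost, and the blended API price are all hypothetical inputs, and only the capex figure is taken from the range above.

```python
def breakeven_million_tokens(capex: float, amortisation_months: int,
                             monthly_opex: float,
                             api_price_per_m_tokens: float) -> float:
    """Monthly volume, in millions of tokens, where self-hosting matches API spend."""
    monthly_cost = capex / amortisation_months + monthly_opex
    return monthly_cost / api_price_per_m_tokens


# Assumed: $720k cluster written off over 3 years, $20k a month for power,
# cooling and ops, compared against a blended API price of $20 per million tokens.
volume = breakeven_million_tokens(capex=720_000,
                                  amortisation_months=36,
                                  monthly_opex=20_000,
                                  api_price_per_m_tokens=20.0)
print(f"Break-even at roughly {volume:,.0f} million tokens per month")
```

Below that volume the API is cheaper on these inputs. The calculation also ignores utilisation, so a cluster that sits idle most of the day needs even more volume to justify itself.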

Reuters reported this week that DeepSeek's forthcoming V4 model, a trillion-parameter architecture with a million-token context window, will run entirely on Huawei's Ascend 950PR chips. No Nvidia. Alibaba, ByteDance and Tencent have already placed bulk orders.

The realistic middle ground is still tiered: smaller fine-tuned models locally or in private cloud for high-volume lower-complexity tasks, frontier APIs for the hard stuff. The question for the next 18 months is how fast the "frontier" tier commoditises.

What This Means

Usage-based pricing is where this ultimately goes. There's no real way around that. The consumer flat-rate model is a subsidy that won't last forever, and Anthropic and OpenAI are both incentivised to push more and more usage onto metered enterprise plans. That's the business model.

If that's the case, then managing which models you're using, and when, becomes increasingly important. At DealSage, we get asked a lot which models we use. It's an important question because the models are genuinely better at different things. But one underappreciated benefit of being model agnostic is that you can also route on cost and security, not just on capability. As the models converge in capability, that's where the differentiation shifts. Chasing the latest benchmark matters less than knowing when to use what, and keeping the bill under control while you do it.

The firms that figure this out in 2026 will have a structural cost advantage over the ones still running everything through a single frontier API and hoping the invoice is manageable. And right now, most firms are in the second camp.

News Digest

KPMG 2026: Making Deal Processes Legible To Machines

KPMG dropped its 2026 Global M&A Outlook on March 24, and most of it covers the ground you'd expect: carve-outs, diverging risk appetites, execution discipline as a source of advantage. The section on AI is the one worth reading twice.

The framing is that the autonomous task horizon is expanding rapidly. Tasks that required continuous human oversight 18 months ago can now be completed with limited intervention. KPMG expects multi-day autonomous execution on complex analytical tasks within the next 12 months.

But the most interesting passage is about where the bottleneck actually sits.

The details:

56% of surveyed organisations are deploying agentic AI in due diligence and valuation, 53% in deal sourcing and strategy, 45% in post-merger integration

Report explicitly names the constraint: "The AI itself is rarely the bottleneck. The more complex challenge is codifying the unwritten rules, institutional shortcuts and tacit knowledge that experienced practitioners carry in their heads"

KPMG's conclusion: "Organisations that capture the most value will likely be those that make their deal processes legible to machines, not just to the humans who currently hold them"

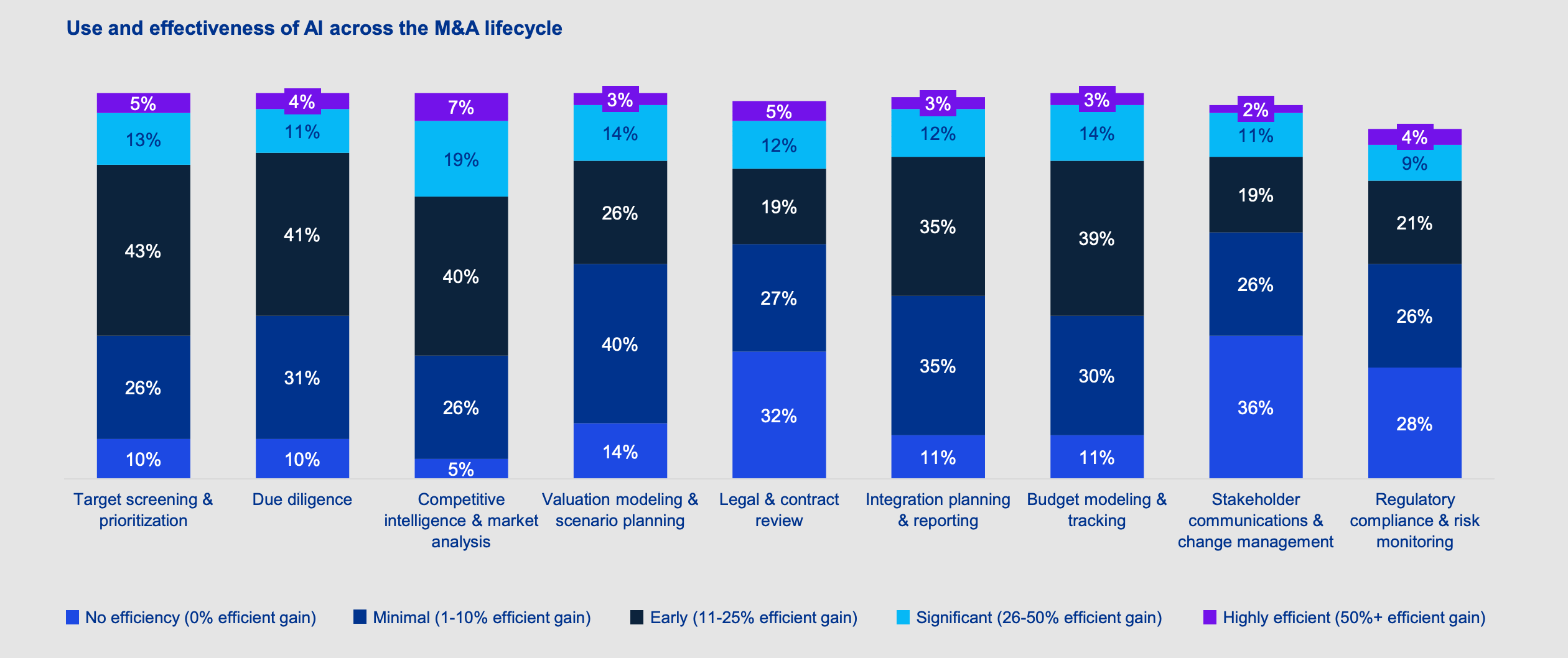

59% report AI providing greater than 10% efficiency gain in competitive intelligence and market analysis

700 PE and corporate dealmakers surveyed across 20 countries

Why it matters: KPMG just named the real problem with AI in M&A in a way the industry has been dancing around. The models aren't the hard bit. The hard bit is getting unstructured documents, emails, thoughts and whatever else into structured form.

My take: This is exactly the bet we're making at DealSage, and it's the first time I've seen a major advisory firm say it this plainly in print. The firms winning with AI aren't the ones buying the most expensive tools. They're the ones doing the hard, unglamorous work of creating a log of every decision made, whether by an agent or by a human. Investment memos, call notes, model assumptions, approval chains. All of it structured, tagged, and stored so that machines can read it and humans can audit it. The routine logging of this information is what makes AI actually useful over time. Everyone else is running a very sophisticated chatbot over a very messy file system and wondering why the output is so shallow.
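To show what "structured, tagged, and stored" could look like in practice, here is a minimal sketch of a decision record written as one JSON line per entry. The field names and the `DecisionRecord` class are hypothetical and chosen for illustration. This is not DealSage's schema or anything KPMG prescribes.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    deal_id: str
    actor: str            # a person's name or an agent identifier
    actor_type: str       # "human" or "agent"
    decision: str
    rationale: str
    tags: list[str] = field(default_factory=list)
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())


def to_jsonl(record: DecisionRecord) -> str:
    """Serialise one record as a single JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


record = DecisionRecord(
    deal_id="example-deal-001",
    actor="analyst@example.com",
    actor_type="human",
    decision="Lowered revenue growth assumption from 12% to 9%",
    rationale="Management call flagged churn in the top customer cohort",
    tags=["model_assumption", "valuation"],
)
print(to_jsonl(record))
```

An append-only file of lines like this is easy for a model to read and easy for a human to audit, which is the whole point of the exercise.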

OpenAI's $852B Week: Record Raise, Media Acquisition, and the Narrative Game

OpenAI had quite a week. On March 31, it closed a $122 billion funding round at an $852 billion valuation, the largest private raise in history. Two days later, it acquired TBPN, a tech talk show, for a price reportedly in the "low hundreds of millions." Two very different moves that tell the same story: OpenAI is building for the IPO.

The funding round was anchored by Amazon ($50B), Nvidia ($30B), and SoftBank ($30B). For the first time, OpenAI raised $3 billion from individual investors through bank channels. The company says it's generating $2 billion in monthly revenue. CFO Sarah Friar said the financing "blows out of the water even the largest IPO that's ever been done."

Then the TBPN deal. If you haven't heard of it, TBPN is a three-hour daily live show on YouTube and X hosted by two former tech founders. It's become the closest thing Silicon Valley has to a trade floor: founders, investors, and operators talking candidly. The show reports directly to OpenAI's chief political operative Chris Lehane. Editorial independence has been promised.

The details:

$122B round closed March 31 at $852B valuation (Amazon $50B, Nvidia $30B, SoftBank $30B)

OpenAI generating $2B/month revenue, 900M+ weekly ChatGPT users

Enterprise now 40%+ of revenue, on track for parity with consumer by year-end

TBPN acquired April 2, terms undisclosed (FT reports "low hundreds of millions")

TBPN on track for $30M+ revenue this year, reports to Chris Lehane

OpenAI's Sora video app discontinued, focus shifting to "AI superapp"

Why it matters: The funding round is pre-IPO positioning. The TBPN acquisition is narrative infrastructure. OpenAI is discovering that in AI, the story you tell matters almost as much as the model you build.

My take: Three observations. First: the TBPN deal reports to Lehane (OpenAI's political operative, not its marketing chief). That tells you what this acquisition actually is. It's a policy and narrative instrument, not a media play. Second: if OpenAI thinks a live daily show is worth hundreds of millions, we're entering a phase where AI companies treat distribution and attention as core strategic assets. Expect Anthropic, Google, and Meta to follow. Third, and this is the bit that's genuinely relevant to finance: social-first media is quietly becoming how technical products get sold into institutional buyers. PE firms and banks are making procurement decisions influenced by what their partners watched on X that morning.

Other Interesting Things I’ve Read or Seen This Week:

Anthropic accidentally leaks the source code for Claude Code (Mar 31) - A routine release pushed 512,000 lines of Claude Code's internal source onto GitHub. Anthropic called it a "release packaging issue caused by human error, not a security breach," which is also how you describe setting your kitchen on fire while cooking pasta. Expect Claude Code clones.

Oracle lays off up to 30,000 to fund AI data centre buildout (Apr 1) - $50B in debt and equity this year to build for Nvidia, OpenAI, Meta and xAI. The cash has to come from somewhere, and this week it came from 20,000 to 30,000 employees. Sell SaaS, buy GPUs, fire the salespeople who sold the SaaS.

Challenger: March job cuts up 25% MoM, AI the leading cited cause (Apr 3) - 60,620 US jobs cut in March. For the first time, AI was the top named reason. The Oracle number alone would have moved this gauge.

Microsoft launches "mid-class" AI model as compute limits bite (Apr 3) - Mustafa Suleyman admits Microsoft can't yet build frontier-scale models and is targeting mid-class as "optimal" on cost, performance and quality. Even the company that owns Azure is GPU-constrained. Let that sink in.

Numos raises $4.25M GC-led seed for AI finance agents (Apr 2) - General Catalyst is betting on AI agents for corporate finance teams. Probably the first of a dozen of these you'll see raise in 2026. The survivors will be the ones who figured out orchestration costs early.

SpaceX files confidentially for IPO (Apr 1) - Potentially the largest listing in history. Worth noting alongside the earlier xAI absorption, which effectively means the SpaceX S-1 will carry an AI strategy.

VC dollars concentrate in fewer, larger deals (Apr 3) - PitchBook Q1 data shows AI and software taking the majority of funding via a handful of mega-rounds. Early-stage founders outside the AI narrative are going to feel this all year.

Acquisition Intelligence is a weekly newsletter on AI in M&A for finance professionals, private equity investors, investment bankers, corp dev teams, and deal-makers.

For questions, feedback, or to share what you're seeing in the market, reply to this email.

P.S. I'm Harry, co-founder of DealSage. We're building an AI-native deal intelligence platform to help professionals turn their institutional knowledge into better decisions. If you're curious what we're up to, check out dealsage.io or just reply here.